The landscape of British education has undergone a seismic shift. As we navigate 2026, the question is no longer whether Artificial Intelligence belongs in the classroom, but how it can be harnessed to enhance human intelligence rather than replace it. From the lecture halls of the Russell Group to primary schools in Manchester, AI is now a “near-universal” presence in UK academia.

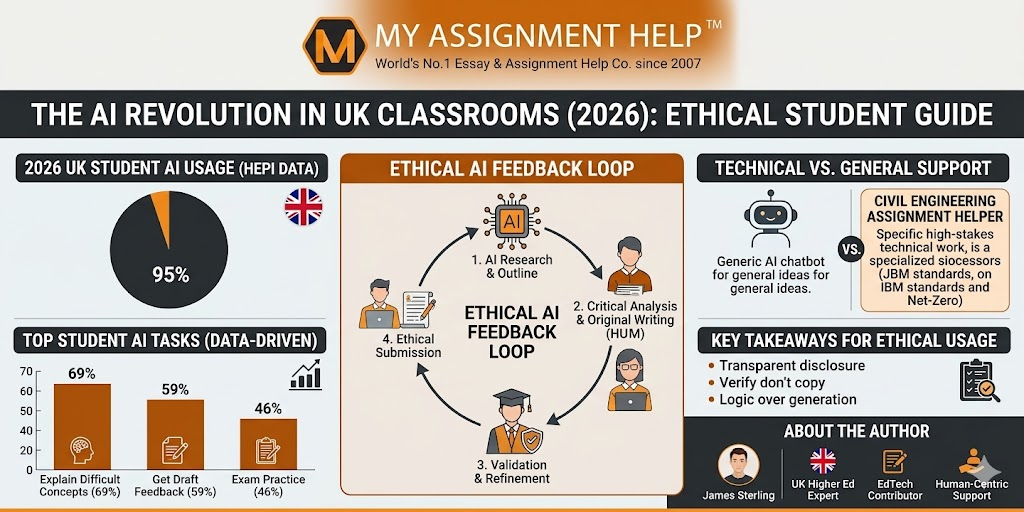

According to the 2026 Student Generative AI Survey (HEPI Report 199), a staggering 95% of UK undergraduates now report using AI tools in at least one aspect of their studies. However, with this rapid adoption comes a critical responsibility: maintaining academic integrity in an era of automated brilliance.

The Shift from “Cheating” to “Co-Piloting”

In 2024, the initial reaction to tools like ChatGPT was often one of restriction. By 2026, the narrative has matured. The UK Department for Education (DfE) recently hosted an international summit on generative AI, emphasizing a “test-and-learn” approach. The goal is to move students away from using AI as a “ghostwriter” and toward using it as a sophisticated “tutor.”

Ethical AI usage in 2026 focuses on Information Gain. This means using technology to explain complex theories, brainstorm structures, or debug code, while the final analytical output remains distinctly human. For many students balancing heavy workloads, finding a reliable assignment helper in the UK has become a bridge between raw AI data and polished, high-distinction academic writing that adheres to strict university rubrics.

Data-Driven Insights: How the UK Uses AI in 2026

Recent data from Save My Exams reveals that 79% of UK students believe AI has directly improved their understanding of difficult topics. The breakdown of usage illustrates a shift toward high-level cognitive support:

- 69% use AI to explain difficult concepts.

- 59% seek instant feedback on their drafts.

- 46% use it to generate practice exam questions.

However, the “digital divide” remains a concern. While 87% of students use free versions of ChatGPT, those with access to premium, institution-aligned tools reportedly see a 62% increase in test scores, according to recent education impact reports.

High-Stakes Precision: The Case of Engineering

While AI is excellent at summarizing history or suggesting creative metaphors, it often falters in high-precision technical fields. In the UK, where infrastructure safety and “Net-Zero” targets are legally mandated, there is zero room for AI “hallucinations.”

Civil engineering students, for instance, are finding that while AI can suggest design concepts, it lacks a nuanced understanding of the Joint Board of Moderators (JBM) standards and the specifics of UK building regulations. This is why specialized civil engineering assignment help remains indispensable. Whether the task is calculating the structural integrity of a digital twin or drafting a carbon-neutral infrastructure report, keeping a human expert in the loop is the most reliable way to ensure accuracy and ethical compliance.

Key Takeaways for Students in 2026

- Transparency is Mandatory: Disclose AI usage, for example in your bibliography, wherever your institution’s 2026 guidelines require it.

- Verify, Don’t Copy: Treat AI output as a “first draft” or a “search result,” never as the final submission.

- Focus on Logic: Use AI to handle repetitive tasks so you can focus on the “Critical Analysis” sections that carry the most marks.

- Use Specialized Support: For technical subjects like Engineering or Nursing, rely on human subject matter experts who understand UK-specific regulations.

Frequently Asked Questions (FAQ)

Q: Is using AI considered plagiarism in UK universities in 2026?

It depends on the institution. Most UK universities, following the Russell Group Principles, allow AI for brainstorming and research but treat submitting AI-generated text as your own work as “contract cheating” or academic misconduct.

Q: How do UK universities detect AI-generated content now?

Detection tools have evolved, but the primary method is now “Stylometric Analysis,” where examiners look for shifts in a student’s personal writing voice compared to the “flat” tone of generative AI.

Q: What is the “Ethical AI” framework?

It is a set of guidelines ensuring AI is used for augmentation (helping you learn) rather than substitution (doing the work for you).

About the Author

James Sterling is a Senior Academic Consultant at MyAssignmentHelp. With over a decade of experience in UK higher education and content strategy, James specializes in helping students navigate the intersection of emerging technologies and academic integrity. He is a frequent contributor to UK EdTech forums and focuses on ensuring that academic support remains human-centric, data-driven, and fully compliant with TEQSA and JBM standards.

Sources & References

- Save My Exams (March 2026): “AI in Education Statistics: Insights from 1,500+ UK Students.”

- Higher Education Policy Institute (HEPI) Report 199: “Student Generative Artificial Intelligence Survey 2026.”

- UK Department for Education (2026): “Using AI and technology in education to improve pupil outcomes.”

- Russell Group (2025/26 Update): “Principles on the use of generative AI tools in education.”